Technical SEO is a type of SEO. SEO, or search engine optimization, is a form of digital marketing that aims to improve a website’s ranking in the unpaid (organic) results on Google’s SERPs (search engine results pages) for key search terms.

SEO is commonly divided into three types: on-page SEO (optimizing content within a site), off-page SEO (earning high-quality external links to a site), and technical SEO.

While we’ll go over technical SEO in more detail in the following sections, it’s important to remember that as a website owner, publisher, or developer, you’ll need all three types of SEO to help you get more web traffic, generate leads, and have repeat visitors who will grow loyal to your brand/business over time.

Continue reading to learn more about technical SEO and how to implement a technical SEO checklist for your website.

What is Technical SEO?

Technical SEO improves a website’s infrastructure so that search engine bots can crawl and index its pages more effectively.

Technical SEO matters because it gives you a site that is technically sound, well planned, and logically laid out for visitors to browse. Such a site earns a better reputation, and over time positive signals such as user comments, reviews, and time spent on the site can support a favorable search ranking.

Why is Technical SEO Important?

Now that we have answered the question “what is technical SEO?”, it is worth understanding why it matters. Good technical SEO helps ensure that a website is well structured and loads quickly. Such websites are more likely to rank higher in search engines than sites that are slow and full of dead links.

Search engines aim to reward websites that give users a good experience, favoring sites that deliver well-organized, timely information. Developers who build and maintain websites with a technical SEO checklist in mind make them safer, faster, and more user-friendly.

This raises the user retention rate and encourages search engines to boost SEO rankings. As a result, it’s critical to establish a solid technical SEO foundation for your website.

Why should you optimize your site technically?

Here are five reasons why you should devote time and resources to improving the technical aspects of your website:

- To make it easier for search engines to crawl, map, and understand your site, which helps them rank it more accurately.

- To increase user attention and retention.

- To outperform competitors’ pages in search results. As content SEO becomes more widespread and accessible, technical optimization can give you an edge over your competitors.

- To make your website mobile-friendly, secure, and responsive. All of these have a big impact on how your site ranks on Google.

- To improve your website’s technical performance by reducing duplicate content and organizing your sitemap better. Both users and search engines benefit from this, so it’s a win-win.

Top 15 Advanced Technical SEO Checklist

It’s not uncommon to feel overwhelmed by the sheer amount of work required to optimise your website. Work through the following technical SEO audit checklist to improve your site’s user experience and its ranking in Google’s organic search results. Here are the top 15 technical SEO checklist items to follow to make your website SEO-friendly:

- HTTPS version

- Mobile-friendly website

- Implementation of structured data markup

- Site speed

- Optimize your crawl budget

- Optimum XML sitemap

- AMP implementation

- Avoid 404 pages

- Canonicalization

- Name your preferred domain

- Breadcrumb Menu

- JavaScript

- Pagination

- Avoid Duplicate and Thin Content

- Hreflang for International Website

You may also get in touch with us if you need Technical SEO Audit Services.

HTTPS Version – A secure site is everything

Until 2014, SSL (Secure Sockets Layer) certificates were mostly used by online shops and other e-commerce websites to provide a safe environment for transactions. In 2014, however, Google announced that it would use HTTPS as a ranking signal, giving every site that wants to rank well in its organic results a reason to adopt it, and HTTPS became an established Google ranking factor.

In 2018, Google Chrome began labeling sites that still used ‘http://’ URLs as ‘Not secure’. To avoid this, make sure your website is secured with an SSL/TLS certificate and served over HTTPS.

The certificate establishes an encrypted connection between your web server and the visitor’s browser, so your URLs begin with ‘https://’ rather than ‘http://’. Why is HTTPS crucial for your website? Here’s a video from Google Chrome Developers.
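Once the certificate is installed, plain-HTTP requests should be sent to the HTTPS version with a permanent (301) redirect. As a minimal sketch, assuming a Python WSGI application (the function name `https_redirect_app` is hypothetical), the logic looks like this; in practice it is usually configured in the web server or CDN instead:

```python
from wsgiref.util import request_uri


def https_redirect_app(environ, start_response):
    """Hypothetical WSGI app: 301-redirect HTTP requests to HTTPS, serve HTTPS normally."""
    if environ.get("wsgi.url_scheme") == "http":
        # Rebuild the full requested URL (host, path, query) and swap the scheme.
        target = "https://" + request_uri(environ).split("://", 1)[1]
        start_response("301 Moved Permanently", [("Location", target)])
        return [b""]
    start_response("200 OK", [("Content-Type", "text/html; charset=utf-8")])
    return [b"<h1>Served over HTTPS</h1>"]
```

Behind a load balancer or proxy that terminates TLS, the application may see every request as `http`, so in that setup the redirect belongs in the proxy configuration.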

Mobile-Friendly Website

In 2018, Google began rolling out mobile-first indexing, which means it primarily uses the mobile version of a page (as seen on a smartphone or tablet) for indexing and ranking, and considers how well that version works on small screens. You can check how your site performs in this area at any time in Google Search Console.

Keep in mind that your mobile site should contain the same content as the desktop version. Another key optimization is to avoid intrusive pop-ups that cover the main content.

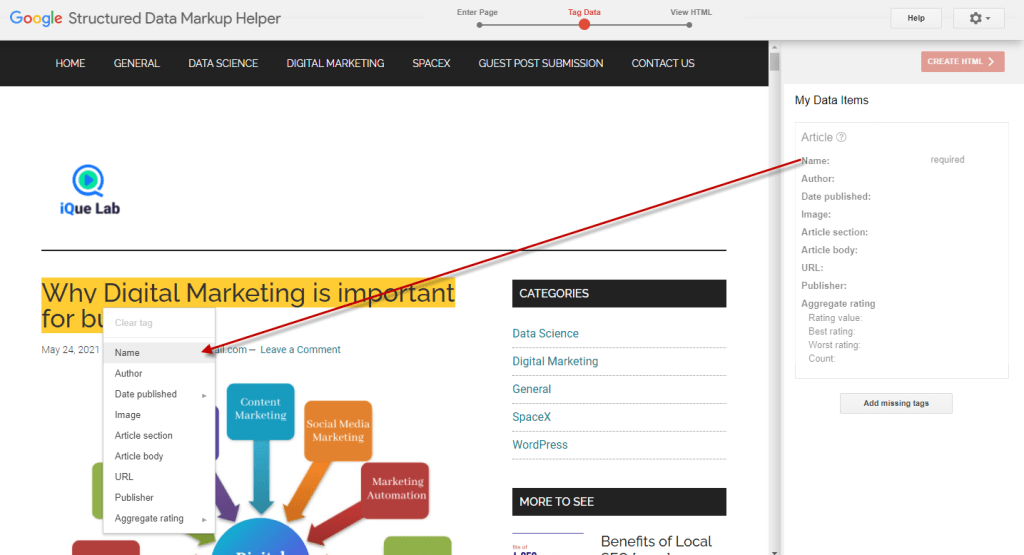
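Responsive pages normally declare a viewport meta tag so the layout scales to the device width. As a rough first check (not a substitute for testing on real devices), this sketch uses Python’s built-in `html.parser` to see whether a page’s HTML declares one; the class and function names are hypothetical:

```python
from html.parser import HTMLParser


class ViewportChecker(HTMLParser):
    """Records the content of <meta name="viewport"> if the page declares one."""

    def __init__(self):
        super().__init__()
        self.viewport = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "viewport":
            self.viewport = attributes.get("content")


def has_responsive_viewport(html: str) -> bool:
    checker = ViewportChecker()
    checker.feed(html)
    # The usual responsive setting scales the layout to the device width.
    return checker.viewport is not None and "width=device-width" in checker.viewport.replace(" ", "")
```

For example, a page whose head contains `<meta name="viewport" content="width=device-width, initial-scale=1">` passes, while a page with no viewport tag does not.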

Implement Structured Data Markup

Structured data markup helps search engines understand your web page, whether it’s a recipe, a book, a how-to guide, or another type of content. You can set it up with Google’s Structured Data Markup Helper and test it with the Rich Results Test, which replaced Google’s Structured Data Testing Tool.

But before you do anything else, go to schema.org, work out which schemas fit your site’s content, and apply them to the relevant URLs. This can earn your pages visually enhanced rich results on Google’s SERPs, which attract more people’s attention. Here’s what Google’s Matt Cutts has to say about implementing structured data markup.
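Structured data is commonly added as JSON-LD inside a `<script type="application/ld+json">` element. The sketch below builds a minimal schema.org `Recipe` object with Python’s `json` module; the function name and the recipe values are made up, and you should check schema.org and Google’s documentation for the properties your content type actually needs:

```python
import json


def recipe_jsonld(name, author, prep_minutes, ingredients):
    """Hypothetical helper: render a minimal schema.org Recipe as a JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Recipe",
        "name": name,
        "author": {"@type": "Person", "name": author},
        # schema.org durations use ISO 8601, e.g. PT15M means 15 minutes.
        "prepTime": f"PT{prep_minutes}M",
        "recipeIngredient": ingredients,
    }
    # Escape "</" so a value can never close the <script> element early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


print(recipe_jsonld("Banana Bread", "Jane Doe", 15, ["3 ripe bananas", "250 g flour"]))
```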


Site Speed Matters

Google has long used page speed as a ranking factor for desktop searches, and in 2018 it announced that page speed would also be a ranking factor for mobile searches. A slow website can also cause visitors to lose interest in learning more about you or shopping on your site, resulting in a high bounce rate.

You can use Google’s PageSpeed Insights tool to assess how you’re doing in this category, and a few techniques can help your pages load faster.

These include caching, using a fast hosting and DNS (Domain Name System) provider, compressing files with technologies like GZIP, serving responsive images (and vector formats such as SVG for logos and icons), and so on.
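To see why text compression helps, this small sketch gzips some repetitive HTML with Python’s standard `gzip` module and reports the compressed size. The markup is a made-up example; on a real site compression is normally switched on in the web server or CDN configuration rather than in application code:

```python
import gzip


def compression_ratio(body: bytes) -> float:
    """Return the gzip-compressed size as a fraction of the original size."""
    compressed = gzip.compress(body, compresslevel=6)
    return len(compressed) / len(body)


# Hypothetical, highly repetitive markup, typical of product listing pages.
html = ("<div class='product-card'><h2>Product</h2><p>In stock</p></div>\n" * 200).encode("utf-8")
print(f"{len(html)} bytes, compressed to {compression_ratio(html):.1%} of the original")
```

Repetitive markup like this shrinks dramatically; real pages compress less, but HTML, CSS, and JavaScript usually still shrink substantially.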

Optimize Your Crawl Budget

Crawl budget is the number of URLs Googlebot can and wants to crawl on your site over a given period. As a website owner, you want to make sure none of that budget is wasted. Websites communicate with search engine crawlers through a robots.txt file, which follows the Robots Exclusion Protocol.

Check your site’s robots.txt file to make sure it does not unnecessarily block important resources (such as JavaScript or CSS files); if it does, search engines may only partially crawl and render your pages. It’s also important to make sure your site has no orphan pages, meaning pages that no other page on the site links to.
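Python’s standard `urllib.robotparser` module can evaluate robots.txt rules offline, which makes it handy for checking that render-critical files are not blocked by accident. The rules and URLs below are hypothetical, and Python’s parser is simpler than Google’s (for example, it does not handle wildcards in paths the same way), so confirm important results in Google Search Console:

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that accidentally blocks the site's JavaScript.
robots_txt = """\
User-agent: *
Disallow: /admin/
Disallow: /assets/js/
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

# Resources a page needs in order to render; none of these should be blocked.
critical = [
    "https://example.com/assets/js/app.js",
    "https://example.com/assets/css/site.css",
]
blocked = [url for url in critical if not parser.can_fetch("Googlebot", url)]
print("Blocked render-critical resources:", blocked)
```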

Other good practices include keeping important pages within about three clicks of the homepage (a shallow click depth), keeping internal links contextual by linking pages with related content, and using keywords in the anchor text of internal links. To learn more about website crawling, watch this video from Google’s Matt Cutts.
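Click depth and orphan pages can both be found with a breadth-first search over your internal link graph, starting at the homepage. In this sketch the link graph is a hypothetical hand-written dictionary; in practice you would build it from a crawl of your own site, and treat any known page (for example, one listed in your sitemap) that the search never reaches as an orphan:

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Breadth-first search from the homepage; depth = minimum number of clicks."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
site = {
    "/": ["/blog/", "/shop/"],
    "/blog/": ["/blog/post-1"],
    "/shop/": ["/shop/shoes/"],
    "/shop/shoes/": ["/shop/shoes/running/"],
    "/shop/shoes/running/": ["/shop/shoes/running/model-x"],
    "/old-landing-page": ["/"],  # links out, but nothing links to it
}

depths = click_depths(site)
all_pages = set(site) | {t for targets in site.values() for t in targets}
orphans = sorted(all_pages - set(depths))
too_deep = sorted(p for p, d in depths.items() if d > 3)
print("Orphan pages:", orphans)
print("More than three clicks deep:", too_deep)
```

The three-click threshold here simply mirrors the rule of thumb above; it is not a hard limit.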

Optimum XML sitemap

An XML sitemap lists the URLs on your site along with optional details about each one, such as when its content was last modified and how important it is relative to other pages on your site (Google has said it ignores that priority value). As the name implies, an XML sitemap gives web crawlers a map of your site and helps them discover the pages you want crawled.

You can use a sitemap generator to produce one for your site, but you should also submit your XML sitemap in Google Search Console so that it can be properly crawled and indexed. Leave out blocked URLs, redirects and redirect chains, broken pages, and pages with no SEO value, such as author bios and privacy policies.
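A sitemap is a plain XML file following the sitemaps.org protocol. This sketch writes one with Python’s `xml.etree.ElementTree`; the function name, URLs, and dates are placeholders, and it emits only `<loc>` and `<lastmod>` because Google has said it ignores `<priority>` and `<changefreq>`:

```python
import xml.etree.ElementTree as ET


def build_sitemap(pages):
    """Hypothetical helper: return sitemap XML (bytes) for (url, lastmod) pairs.

    Only pass canonical, indexable URLs that return HTTP 200.
    """
    urlset = ET.Element("urlset", xmlns="http://www.sitemaps.org/schemas/sitemap/0.9")
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc          # absolute URL
        ET.SubElement(url, "lastmod").text = lastmod  # W3C date format, e.g. 2024-05-01
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


xml_bytes = build_sitemap([
    ("https://example.com/", "2024-05-01"),
    ("https://example.com/blog/technical-seo-checklist", "2024-04-18"),
])
print(xml_bytes.decode("utf-8"))
```

ElementTree escapes characters such as `&` in URLs automatically, which hand-built string templates often get wrong.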

A W3C validator can be used to check for coding mistakes.

AMP Implementation

AMP (Accelerated Mobile Pages) is a stripped-down form of HTML designed to make mobile pages load faster. It works by restricting custom JavaScript and other heavy features in favor of a library of lightweight, prebuilt components. Applied correctly, AMP may improve your CTR (click-through rate) and help attract backlinks to your site.

Google has featured AMP pages in search result carousels such as Top Stories, which draws more attention to them, although since 2021 AMP has no longer been required to appear there. Keep in mind that AMP isn’t a replacement for a mobile-friendly website.

Avoid 404 Pages

If you have moved a page to a new URL, a 301 redirect to the new address is the right choice; if a page no longer exists and has no replacement, return a 404 status code. If you’re using WordPress or another content publishing platform, make sure your 404 page is search engine optimised: give it a structure that matches the rest of your site, suggest similar pages to visit instead, and make it easy for users to return to where they came from.

This way, web crawlers can crawl and index your site without getting confused.
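The distinction between moved and missing pages can be sketched as a tiny request handler: moved URLs get a 301 to their new address, and unknown URLs get a real 404 status together with a helpful page. The redirect map, page list, and function name are hypothetical:

```python
# Hypothetical site data: moved URLs and pages that currently exist.
REDIRECTS = {"/old-pricing": "/pricing"}
PAGES = {"/": "Home", "/pricing": "Pricing"}

NOT_FOUND_HTML = (
    "<h1>Page not found</h1>"
    '<p>Try the <a href="/">homepage</a> or our <a href="/pricing">pricing page</a>.</p>'
)


def respond(path):
    """Return (status, headers, body) for a request path."""
    if path in REDIRECTS:
        return "301 Moved Permanently", {"Location": REDIRECTS[path]}, ""
    if path in PAGES:
        return "200 OK", {"Content-Type": "text/html"}, f"<h1>{PAGES[path]}</h1>"
    # Serve a friendly page but keep the 404 status; a helpful page that
    # returns 200 would look like a real page (a "soft 404") to crawlers.
    return "404 Not Found", {"Content-Type": "text/html"}, NOT_FOUND_HTML
```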

Canonicalization

When it comes to good site hygiene, duplicate content is a big no-no. A canonical URL tells Google which version of a web page it should index. You can specify it by adding a link element with rel="canonical" to the head section of the page’s HTML.

It’s a good idea to define a preferred canonical URL for every page on your site. To avoid duplication in the first place, you can also stop your CMS (content management system, such as WordPress) from publishing several versions of the same content. Here’s what Google’s Matt Cutts had to say about canonicalization.
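A common source of duplicate URLs is tracking parameters and fragments appended to the same page. This sketch derives a canonical URL by dropping them and then renders the canonical link tag. Which parameters count as “tracking” is an assumption (the `TRACKING_PARAMS` set is hypothetical) that you would adjust for your own site:

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical list of query parameters that never change page content.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "gclid", "sessionid"}


def canonical_url(url: str) -> str:
    parts = urlsplit(url)
    kept = [(k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True)
            if k.lower() not in TRACKING_PARAMS]
    # Keep content-changing parameters, lowercase the host, drop the fragment.
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path or "/", urlencode(kept), ""))


def canonical_tag(url: str) -> str:
    return f'<link rel="canonical" href="{escape(canonical_url(url))}">'
```

For example, `canonical_url("https://Example.com/shoes?color=red&utm_source=news#reviews")` returns `https://example.com/shoes?color=red`.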


Noindex Tag and Category Pages

A ‘noindex’ tag tells search engines not to include a page in their index (a separate ‘nofollow’ directive tells them not to follow the page’s links). Developers frequently use it to keep low-value pages out of the index so that Google focuses on their most important, prioritised pages. The tag can be used on thin archive or category pages. Note that search engines can only see the tag if the page is not blocked in robots.txt.
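A noindex directive is usually written as a robots meta tag in the page’s head (or sent as an `X-Robots-Tag` HTTP header). This sketch chooses the tag by page type; the set of page types kept out of the index is a hypothetical policy, not a rule:

```python
# Hypothetical policy: thin listing pages stay out of the index,
# while crawlers may still follow their links to the articles they list.
NOINDEX_TYPES = {"archive", "category", "tag"}


def robots_meta(page_type: str) -> str:
    directives = "noindex, follow" if page_type in NOINDEX_TYPES else "index, follow"
    return f'<meta name="robots" content="{directives}">'
```

Because `index, follow` is the default behavior, emitting it is optional; it is included here only to make the policy explicit.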

Name your preferred domain

You can reach a website by typing https://www.abc.com or https://abc.com (without the www) into the address bar. Visitors may not think twice about which one they use, but serving both versions can confuse search engines and cause indexing and ranking problems. As a result, you need to tell Google which version you prefer.

It’s worth noting that there’s no benefit to choosing one over the other, but once you have decided, stick with it and 301-redirect the other version to it; changing your mind later means going through a site migration. Google Webmaster Tools (now Google Search Console) used to let you choose a preferred domain under “Site Settings”, but that setting is no longer available, so signal your choice with 301 redirects and consistent canonical URLs instead.
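Once you have picked a preferred version, every other variant should 301-redirect to it. This sketch computes the redirect target, assuming `https://www.example.com` is the preferred version; the hostnames and the function name are hypothetical:

```python
from typing import Optional
from urllib.parse import urlsplit, urlunsplit

# Hypothetical hostnames: every variant of the site, and the one to keep.
SITE_HOSTS = {"example.com", "www.example.com"}
PREFERRED_HOST = "www.example.com"


def redirect_target(url: str) -> Optional[str]:
    """Return the URL to 301-redirect to, or None if no redirect is needed."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host not in SITE_HOSTS:
        return None  # not one of our hostnames; leave it alone
    if parts.scheme == "https" and host == PREFERRED_HOST:
        return None  # already the preferred version
    # Keep the path and query; fragments are never sent to the server anyway.
    return urlunsplit(("https", PREFERRED_HOST, parts.path or "/", parts.query, ""))
```

For example, `http://example.com/about?x=1` maps to `https://www.example.com/about?x=1`, while the preferred URL itself needs no redirect.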

Breadcrumb Menus

Breadcrumbs are an important structural element of a technical SEO checklist. Also known as a “breadcrumb trail”, they are a type of navigation that shows users where they are on the site, which greatly improves a visitor’s sense of orientation. Breadcrumbs clearly depict the website hierarchy and the user’s current location within it.

They also reduce the number of steps needed to return to the homepage, a different section, or a higher-level page. Breadcrumbs are common on websites with many sections that need a logical organization, which makes them an excellent choice for e-commerce websites.
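Breadcrumbs can also be described to search engines with schema.org `BreadcrumbList` markup, which Google can use to display the trail in its search results. A minimal sketch that turns an ordered trail into JSON-LD; the function name and the trail itself are made up:

```python
import json


def breadcrumb_jsonld(trail):
    """trail: ordered (name, absolute_url) pairs from the homepage down to the current page."""
    items = [
        {"@type": "ListItem", "position": i, "name": name, "item": url}
        for i, (name, url) in enumerate(trail, start=1)  # positions start at 1
    ]
    return json.dumps({
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": items,
    }, indent=2)


print(breadcrumb_jsonld([
    ("Home", "https://example.com/"),
    ("Shoes", "https://example.com/shoes/"),
    ("Running Shoes", "https://example.com/shoes/running/"),
]))
```

The output would go inside a `<script type="application/ld+json">` element on the page, alongside the visible breadcrumb navigation.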

JavaScript

Making JavaScript-heavy websites search-friendly is one of the foundations of technical SEO. Whether it is done in-house or by an SEO agency, the goal is to make these websites more visible and help them rank higher in search engines. Google tools such as the URL Inspection Tool in Google Search Console and the Rich Results Test are essential for debugging JavaScript SEO problems (the Mobile-Friendly Test, once also used for this, was retired in 2023).

Google processes JavaScript web apps in three main phases: crawling, rendering, and indexing.
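One quick JavaScript SEO check is to look at what the raw HTML response contains before any scripts run, since that is what the crawler has to work with until the page is rendered. This sketch extracts visible text from raw HTML with Python’s `html.parser`, skipping `<script>` and `<style>`; the class name and sample markup are hypothetical. An (almost) empty result suggests the content depends entirely on rendering:

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text that sits outside <script> and <style> elements."""

    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.depth_in_skipped = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self.depth_in_skipped += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self.depth_in_skipped:
            self.depth_in_skipped -= 1

    def handle_data(self, data):
        if not self.depth_in_skipped and data.strip():
            self.chunks.append(data.strip())


def raw_visible_text(html: str) -> str:
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


# An app shell: only the <title> carries text until JavaScript runs.
shell = '<html><head><title>Shop</title><script>render()</script></head><body><div id="root"></div></body></html>'
print(repr(raw_visible_text(shell)))
```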

Pagination

Pagination is a method of dividing content across a number of pages. An important part of technical SEO, it organizes a long list of products or articles into a format that is easy to consume. News publishers, e-commerce sites, blogs, and forums all use pagination.

Pagination can lead to duplicate content problems. rel=“next” and rel=“prev” links were traditionally used to tell search engines that the pages after the first one are continuations of the same series. However, Google announced in 2019 that it no longer uses these links as an indexing signal, although other search engines may still use them.

Google now treats each page in a series as a page in its own right, so give every paginated page a self-referencing canonical URL (rather than pointing them all at page one) and link the pages to one another with ordinary links so crawlers can discover them.
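For a paginated series, each page can carry a self-referencing canonical tag plus optional `rel="prev"`/`rel="next"` links, which Google no longer uses for indexing but which are harmless and may still help other search engines. This sketch generates those head tags, assuming a hypothetical `?page=N` URL scheme in which page 1 lives at the bare URL:

```python
def pagination_links(base_url: str, page: int, last_page: int) -> list[str]:
    """Hypothetical helper: head tags for page `page` of a series of `last_page` pages."""

    def url_for(n: int) -> str:
        # Page 1 uses the bare URL so it doesn't duplicate "?page=1".
        return base_url if n == 1 else f"{base_url}?page={n}"

    tags = [f'<link rel="canonical" href="{url_for(page)}">']  # self-referencing
    if page > 1:
        tags.append(f'<link rel="prev" href="{url_for(page - 1)}">')
    if page < last_page:
        tags.append(f'<link rel="next" href="{url_for(page + 1)}">')
    return tags


for tag in pagination_links("https://example.com/blog/", 2, 3):
    print(tag)
```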

Avoid duplicate or thin content

Thin content, meaning pages that offer little unique value, is one of the most important issues to understand in technical SEO. Performance data from Google, such as pages in Google Search Console that earn few impressions or clicks, can help you find it. To avoid thin content, publish higher-quality content prepared by specialists instead of mass-produced content.

Duplicate content can also be filtered out before the downloaded HTML is sent for rendering. With app shell models, the initial HTML response may contain very little text and code, so many pages, and even different websites, can serve the same visible code. These pages may then be treated as duplicates and may not be rendered right away.

Given enough time, the issue should resolve itself, but it can be a problem for newer websites. Self-referencing canonical link elements are useful here, since they help avoid duplicate content issues and signal the original page (or page one) that you want to rank in search engines.
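Exact duplicates can be flagged by normalizing each page’s text and grouping pages whose normalized text hashes to the same value. This sketch only catches copies that are identical after lowercasing and collapsing whitespace; near-duplicates would need a similarity measure instead. The page texts and function names are made up:

```python
import hashlib
import re
from collections import defaultdict


def fingerprint(text: str) -> str:
    """Hash of the text after collapsing whitespace and lowercasing."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def duplicate_groups(pages: dict[str, str]) -> list[list[str]]:
    """Group URLs whose normalized text is identical."""
    groups = defaultdict(list)
    for url, text in pages.items():
        groups[fingerprint(text)].append(url)
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]


# Hypothetical extracted page text, keyed by URL.
pages = {
    "/shoes?sort=price": "Running shoes\n  Lightweight trainers for daily runs.",
    "/shoes": "Running Shoes Lightweight trainers for daily runs.",
    "/socks": "Running socks that stay dry.",
}
print(duplicate_groups(pages))
```

Each group that comes back is a candidate for a canonical tag pointing at the version you want to rank.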

Extra Technical SEO Tips

Check Your Site for Dead Links

Broken links (links to pages that don’t respond) and dead links (links to pages that have been deleted or moved) can hurt your website’s SEO for two reasons. First, Google’s crawlers waste time on these links without obtaining useful information. Second, visitors who hit a dead end tend to leave quickly, which creates a poor experience and a negative impression of your site.

As a result, it’s important to audit your website regularly, find dead or broken links, and fix them. Google Search Console (GSC) is one of the easiest ways to find these links.
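Besides the reports in Search Console, you can catch broken internal links before a page goes live. This offline sketch extracts `<a href>` values with Python’s `html.parser`, resolves them against the page URL, and flags internal links whose path is not among your known pages. The known-page set stands in for something like your sitemap or a CMS export, and the function names are hypothetical; checking live status codes would require HTTP requests, which this sketch does not make:

```python
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlsplit


class LinkCollector(HTMLParser):
    """Collects the href of every <a> element."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def broken_internal_links(page_url: str, html: str, known_pages: set[str]) -> list[str]:
    collector = LinkCollector()
    collector.feed(html)
    site_host = urlsplit(page_url).netloc
    broken = []
    for href in collector.hrefs:
        absolute, _fragment = urldefrag(urljoin(page_url, href))
        parts = urlsplit(absolute)
        if parts.netloc != site_host or parts.scheme not in ("http", "https"):
            continue  # external or non-web link (e.g. mailto:)
        if parts.path not in known_pages:
            broken.append(absolute)
    return broken
```

For example, on a page that links to `/pricing` and `/old-page`, with only `/` and `/pricing` in the known set, the function returns `['https://example.com/old-page']`.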

Hreflang for International Websites

Hreflang tags specify which language, and optionally which country, a page is intended for. They also help search engines treat localized versions of a page as alternates rather than duplicate content. When a website targets multiple countries that share a language, search engines need some help determining which version is meant for which audience; with hreflang in place, they can show the appropriate version to searchers in each area.
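Hreflang annotations are usually link elements with rel="alternate" in each page’s head, and every language version should list all versions, including itself. This sketch generates that set plus an `x-default` fallback for a site targeting several English-speaking countries; the locale codes, URLs, and function name are hypothetical:

```python
def hreflang_tags(alternates: dict[str, str], default_url: str) -> list[str]:
    """alternates maps language(-region) codes such as 'en-gb' to absolute URLs."""
    tags = [
        f'<link rel="alternate" hreflang="{code}" href="{url}">'
        for code, url in sorted(alternates.items())
    ]
    # x-default names the page to show when no listed locale matches the searcher.
    tags.append(f'<link rel="alternate" hreflang="x-default" href="{default_url}">')
    return tags


versions = {
    "en-us": "https://example.com/us/",
    "en-gb": "https://example.com/uk/",
    "en-au": "https://example.com/au/",
}
for tag in hreflang_tags(versions, "https://example.com/"):
    print(tag)
```

The same full set of tags goes on each of the three country pages, so the annotations confirm one another.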

Conclusion

Any business or brand owner should devote valuable time to getting the technical SEO of their website perfect, as the rewards far exceed the initial difficulty they may have in comprehending the principles and implementing the tactics.

On the plus side, once the groundwork is done correctly, keeping it in shape mostly comes down to occasional site health audits. Check out our blogs for the most up-to-date information about SEO and other topics.